Logstash, Meet Sentinel… Sentinel, Meet Logstash!

Background

In both our free workshop and our popular Defending Enterprises training we make heavy use of Elastic’s Winlogbeat, Auditbeat, Filebeat and Packetbeat agents. In past editions this data ended up in an Elastic backend that was accessed using Kibana, a common setup that works well.

Since the release of Microsoft Sentinel back in 2019 there have been many improvements, additions and, as you’d expect from Microsoft, name changes! A large number of businesses are now utilising this powerful SIEM and SOAR solution, and in mid-2021 we also migrated to it.

Part 1 of this series details how to send data from an Elastic agent > Elastic Logstash > Microsoft Sentinel. In upcoming posts we’ll build on this configuration to further tune this setup.

The Microsoft Environment

First, we need to create a Log Analytics workspace. This is used by Microsoft Sentinel and it’ll be where our Logstash server sends its data.

- Log onto the Azure Portal and create a new Log Analytics workspace

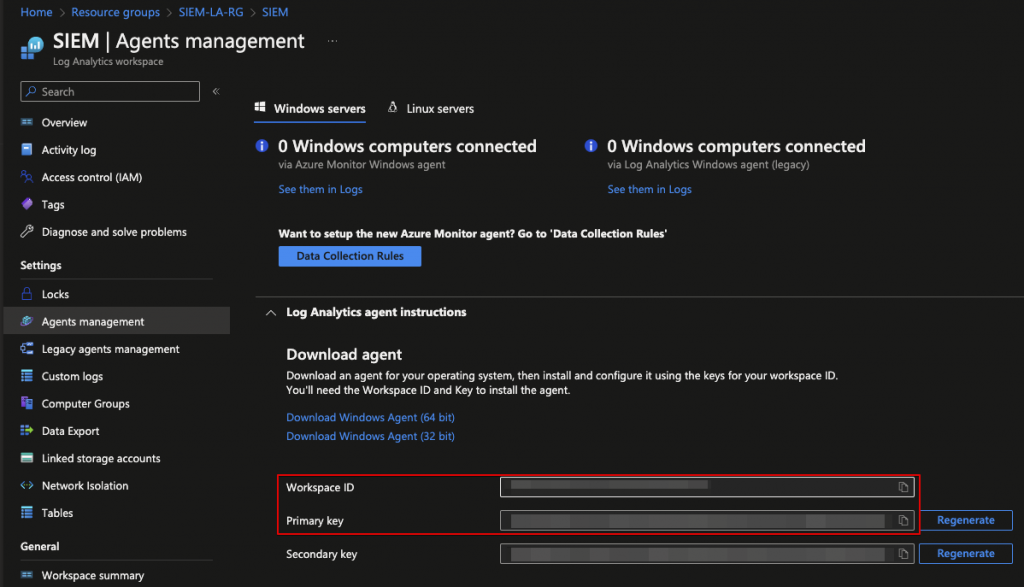

- Navigate to the Log Analytics workspace and select “Agents Management” from the menu, then “Log Analytics agent instructions”

- Make a note of the Workspace ID and Primary Key values as these will shortly be required

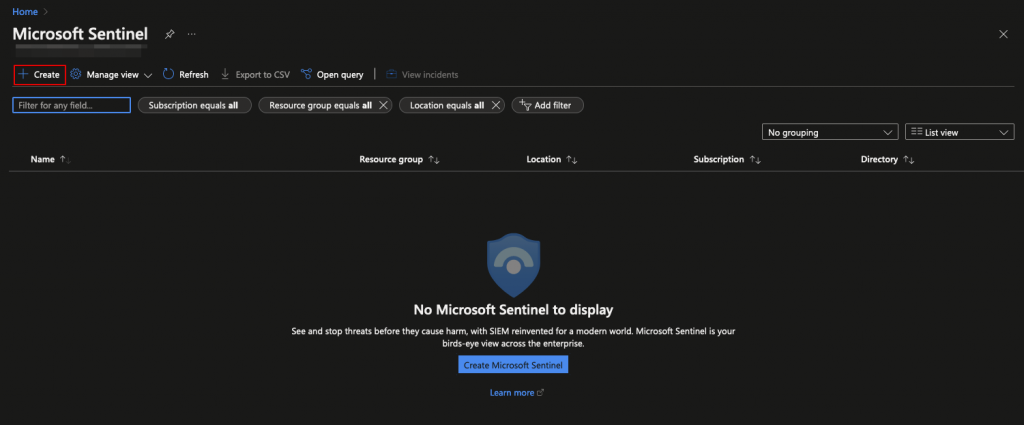

- Open Microsoft Sentinel, select “Create” from the drop-down list and select the Log Analytics Workspace you previously created
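To show why both values noted above matter, here is a minimal Python sketch of how a client can sign a request for the Log Analytics HTTP Data Collector API, which, as we understand it, is what the Logstash output plugin used later in this post talks to. The function names are our own for illustration and are not part of the plugin; the signing scheme follows Microsoft's published description of the API, so treat it as a sketch rather than the plugin's actual code.

```python
import base64
import hashlib
import hmac
from email.utils import formatdate


def rfc1123_now() -> str:
    """Current time in the RFC 1123 format expected in the x-ms-date header."""
    return formatdate(timeval=None, localtime=False, usegmt=True)


def build_shared_key_header(workspace_id: str, workspace_key: str,
                            content_length: int, rfc1123_date: str) -> str:
    """Return an Authorization header value for a Data Collector API POST.

    workspace_id  -- the Workspace ID from the portal
    workspace_key -- the Primary Key from the portal (a base64 string)
    """
    string_to_sign = (
        f"POST\n{content_length}\napplication/json\n"
        f"x-ms-date:{rfc1123_date}\n/api/logs"
    )
    # The key is displayed base64-encoded, so decode it before signing.
    key_bytes = base64.b64decode(workspace_key)
    digest = hmac.new(key_bytes, string_to_sign.encode("utf-8"),
                      hashlib.sha256).digest()
    signature = base64.b64encode(digest).decode("ascii")
    return f"SharedKey {workspace_id}:{signature}"
```

The takeaway is that the Primary Key never travels with the data; it only signs each request, which is one more reason to keep it out of shared configuration files and screenshots.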

Now to set up the Logstash server…

Elastic Logstash

Elastic’s blurb on Logstash summarises the product nicely:

“Logstash is a free and open server-side data processing pipeline that ingests data from a multitude of sources, transforms it, and then sends it to your favourite stash”

Source: Elastic Logstash

We’re not going into depth on setup in this post, as things are likely to change in the future and it’s always best to consult the official documentation when configuring a new product. That said, the basic setup process is outlined below. At the time of writing, version 8.5.0 of Logstash, Winlogbeat and the other Elastic tools was used for this guide.

- Install Logstash (Elastic’s official guide)

- Install the Microsoft Sentinel > Logstash output plugin

- Note: this plugin is, and has been for quite some time, in the development stage

sudo /usr/share/logstash/bin/logstash-plugin install microsoft-logstash-output-azure-loganalytics

- Configure TLS

- As per the Elastic guide, download the Elasticsearch binaries, extract the contents and use elasticsearch-certutil to generate the CA certificate.

elasticsearch-8.5.0/bin/elasticsearch-certutil ca --pem

- Create and convert client certificates

- In this example certificates are created and stored at /etc/logstash/certs/ on the Logstash server and “C:\Program Files\Elastic\Beats\Certs\” on the Windows hosts.

elasticsearch-8.5.0/bin/elasticsearch-certutil cert --name logstash-demo --ca-cert /etc/logstash/certs/ca.crt --ca-key /ca/ca.key --dns logstash-demo.insecurity.demo --ip 10.2.0.6 --pem

openssl pkcs8 -inform PEM -in logstash-demo.key -topk8 -nocrypt -outform PEM -out logstash-demo.pkcs8.key

- Repeat the above step for each workstation/server in the environment that will need to communicate with Logstash.
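Repeating the certificate step by hand gets tedious once there are more than a couple of hosts. The following Python sketch simply builds the same elasticsearch-certutil command line shown above for each entry in a host list, so the commands can be reviewed before being run. The `Host` class, the function names and the example DNS name and IP address for vm400 are hypothetical; only the certutil arguments and paths are taken from this post.

```python
from __future__ import annotations

import shlex
from dataclasses import dataclass

# Path as used earlier in this post; adjust to wherever the binaries were extracted.
CERTUTIL = "elasticsearch-8.5.0/bin/elasticsearch-certutil"


@dataclass(frozen=True)
class Host:
    name: str  # becomes the certificate/key file name, e.g. vm400.crt
    dns: str   # DNS name placed in the certificate
    ip: str    # IP address placed in the certificate


def certutil_command(host: Host, ca_cert: str, ca_key: str) -> list[str]:
    """Build the argument list for one host's PEM certificate."""
    return [
        CERTUTIL, "cert",
        "--name", host.name,
        "--ca-cert", ca_cert,
        "--ca-key", ca_key,
        "--dns", host.dns,
        "--ip", host.ip,
        "--pem",
    ]


def render_commands(hosts: list[Host],
                    ca_cert: str = "/etc/logstash/certs/ca.crt",
                    ca_key: str = "/ca/ca.key") -> list[str]:
    """Return one shell-quoted command line per host."""
    return [shlex.join(certutil_command(h, ca_cert, ca_key)) for h in hosts]


# Hypothetical inventory: the DNS name and IP below are placeholders.
HOSTS = [Host("vm400", "vm400.insecurity.demo", "10.2.0.10")]
```

Calling `render_commands(HOSTS)` returns the command strings without executing anything, which keeps the CA key handling a deliberate, manual step.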

The following snippet gives a preview of the configuration used within this test environment. This file was created and resides at /etc/logstash/conf.d/ms.conf on the Logstash server.

You’ll see that no filters have been defined (a filter is where we would further process or otherwise act on the data), as this will be covered in a later post.

input {
  beats {
    port => 5044
    ssl => true
    ssl_certificate_authorities => ["/etc/logstash/certs/ca.crt"]
    ssl_certificate => "/etc/logstash/certs/logstash-demo.crt"
    ssl_key => "/etc/logstash/certs/logstash-demo.pkcs8.key"
    ssl_verify_mode => "force_peer"
  }
}

output {
  microsoft-logstash-output-azure-loganalytics {
    workspace_id => "<WORKSPACE_ID>"
    workspace_key => "<WORKSPACE_KEY>"
    custom_log_table_name => "SIEM"
    time_generated_field => "@timestamp"
    plugin_flush_interval => 5
    amount_resizing => false
    max_items => 5000
  }
}

Now to get some data ingested from clients…
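Before pointing real agents at the beats input, it can be handy to confirm that a client certificate signed by the same CA is accepted on port 5044. The sketch below uses only Python's standard library to attempt a mutual TLS handshake; the function name is our own, and it assumes Python is available on a machine that holds a client certificate and key. It only proves the handshake, not that Logstash will go on to process events.

```python
import socket
import ssl
from typing import Tuple


def check_beats_tls(host: str, port: int, ca_file: str,
                    cert_file: str, key_file: str,
                    timeout: float = 5.0) -> Tuple[bool, str]:
    """Attempt a TLS handshake presenting a client certificate.

    Returns (True, details) on success, or (False, reason) if the files
    cannot be loaded, the connection fails or the handshake is rejected.
    """
    try:
        # Trust only our own CA, mirroring ssl_certificate_authorities.
        context = ssl.create_default_context(cafile=ca_file)
        # Present the client certificate, as force_peer requires one.
        context.load_cert_chain(certfile=cert_file, keyfile=key_file)
        with socket.create_connection((host, port), timeout=timeout) as sock:
            # server_hostname must match a DNS name or IP in the server certificate.
            with context.wrap_socket(sock, server_hostname=host) as tls:
                return True, f"handshake ok ({tls.version()})"
    except OSError as exc:
        # ssl.SSLError, timeouts and missing files are all OSError subclasses.
        return False, f"{type(exc).__name__}: {exc}"
```

For the environment in this post, a call might look like `check_beats_tls("10.2.0.6", 5044, "ca.crt", "vm400.crt", "vm400.key")`, run from a directory containing copies of those files. A hostname mismatch error usually means the name or IP used to connect was not included when the Logstash certificate was generated.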

Elastic Winlogbeat

In a nutshell, Winlogbeat is an Elastic agent that ships Windows event logs to an Elasticsearch database or, as in this example, Logstash.

- Download the Winlogbeat zip from Elastic

- Extract the Winlogbeat ZIP. In this demo environment, all Winlogbeat files reside at “C:\Program Files\Elastic\Beats\Winlogbeat\”

- Ensure Winlogbeat sends data to Logstash instead of the default Elasticsearch database. Our minimised demo winlogbeat.yml configuration is shown below and resides at “C:\Program Files\Elastic\Beats\Winlogbeat\winlogbeat.yml” on the Windows server.

winlogbeat.event_logs:
  - name: System
  - name: Security
  - name: Microsoft-Windows-Sysmon/Operational

output.logstash:
  hosts: ["10.2.0.6:5044"]
  ssl.certificate_authorities: ["C:\\Program Files\\Elastic\\Beats\\Certs\\ca.crt"]
  ssl.certificate: "C:\\Program Files\\Elastic\\Beats\\Certs\\vm400.crt"
  ssl.key: "C:\\Program Files\\Elastic\\Beats\\Certs\\vm400.key"

- Test the configuration and check for any errors

"C:\Program Files\Elastic\Beats\Winlogbeat\winlogbeat.exe" test config -c "C:\Program Files\Elastic\Beats\Winlogbeat\winlogbeat.yml" -e

- Install and start the Winlogbeat service

.\install-service-winlogbeat.ps1

Get-Service -Name winlogbeat | Start-Service

The Data Flows…

OK, we’re set up and ready to see that data!

Return to the Logstash server and start the Logstash service.

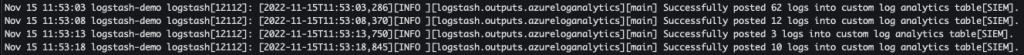

sudo systemctl start logstash.service

By tailing the syslog file you should see events being posted to the Log Analytics table “SIEM” (or the custom name you gave in the Logstash configuration). Note that it can take around 5 minutes for the data to appear in Microsoft Sentinel.

tail -f /var/log/syslog

Log onto Microsoft Sentinel and verify that data is available.

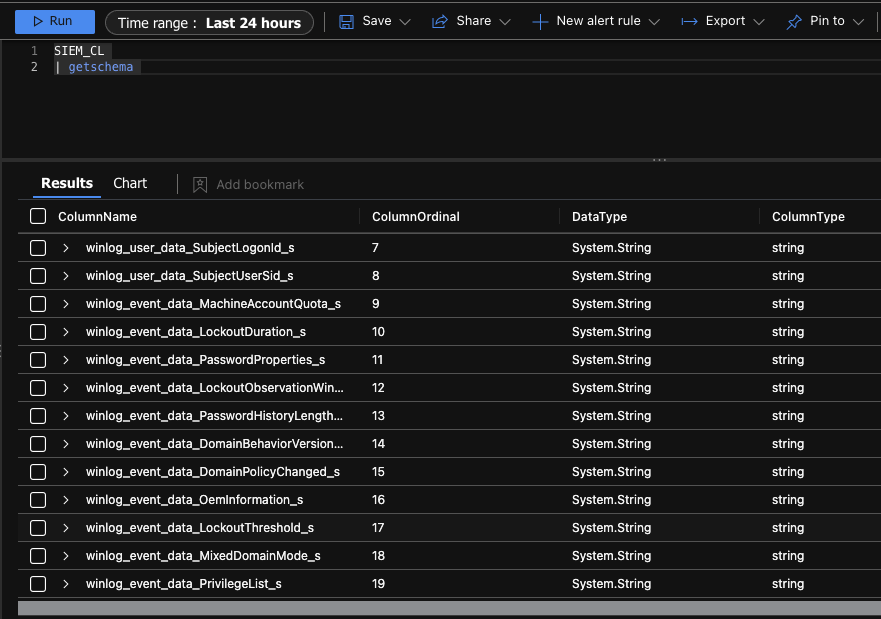

You can view schema information and available fields by running the following KQL query (note that _CL “Custom Logs” is added to the end of the table name by Log Analytics).

SIEM_CL

| getschema

Run a few queries to verify that data is being logged correctly.

The Elastic Winlogbeat field names take some getting used to and can seem a little (very) obscure – take a look at the official Winlogbeat documentation for some hints on what data resides where.

Also note that in Log Analytics custom tables a “.” in a field name is replaced by “_” and a type suffix is appended (“_s” for string values), so the field winlog.computer_name becomes winlog_computer_name_s.
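When translating field names from the Winlogbeat documentation into KQL, the renaming rule can be captured in a few lines of Python. This is a minimal sketch with helper names of our own choosing. The “_s” suffix and the “_CL” table suffix come from this post; the “_b” (boolean) and “_d” (number) suffixes are our understanding of how Log Analytics custom logs type other values, so verify them against getschema for your own table.

```python
def log_analytics_table(custom_log_table_name: str) -> str:
    """Table name as it appears in Log Analytics, e.g. SIEM -> SIEM_CL."""
    return f"{custom_log_table_name}_CL"


def log_analytics_column(field: str, value: object = "") -> str:
    """Translate a Beats field name into the expected custom-log column name.

    Dots become underscores and a type suffix is appended based on the
    kind of value the field holds.
    """
    # bool must be tested before int, because bool is a subclass of int.
    if isinstance(value, bool):
        suffix = "_b"  # assumption: boolean columns
    elif isinstance(value, (int, float)):
        suffix = "_d"  # assumption: numeric (double) columns
    else:
        suffix = "_s"  # string columns, as described in this post
    return field.replace(".", "_") + suffix
```

For example, `log_analytics_column("winlog.computer_name", "vm400")` gives `winlog_computer_name_s`, which is the column to use against `SIEM_CL`.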

That’s it for this initial post! We’ll build on, improve and utilise further functionality in both Logstash and Microsoft Sentinel in upcoming segments.

